Flipper.enable(☁️)

Even when you have a fully functioning product, there are a few hurdles to launching it. Read on to learn how we tackled them.

I previously wrote about why I was working on Flipper Cloud. Since then, everything has changed.

We bought Speaker Deck back from GitHub. My wife and I had another child. Microsoft acquired GitHub. I left GitHub. I joined Box Out Sports. Steve and I launched Speaker Deck Pro. It has been a busy two years!

And I'd be remiss not to mention the biggest change of all – the pandemic.

All of these things led to Flipper Cloud getting pushed to the side. I mean, it was running and working great.

We used it on both Box Out and Speaker Deck. But no one else could. Well, save the lucky few who guessed the magic word. Haha!

Following the release of Speaker Deck Pro, Steve and I knew it was Flipper's turn. Seeing people give us their hard-earned money for Speaker Deck was inspiring. Having a fully functional product for 2 years that no one can pay for is stupid.

But launching a product is no easy thing.

The First Hurdle: Billing

The first thing preventing Flipper from launching was a billing system. Turns out if people can't pay you, you don't make any money.

Thankfully, Stripe makes that really easy these days – especially with their new-ish billing portal.

Steve completely rewrote the Box Out billing system in May 2020. And he was fresh off integrating Speaker Deck with Stripe in December 2020. So we were confident we could have something solid in place in a few days.

Before you can bill though, you must price.

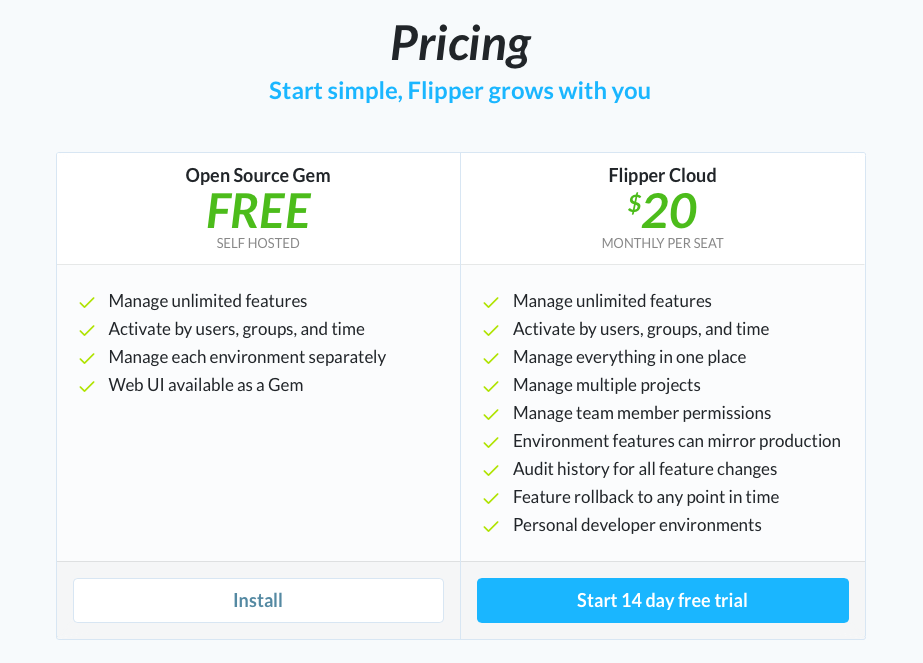

The Second Hurdle: Pricing

More difficult than naming is pricing. Steve and I have always preferred simple pricing.

- Whole numbers like $30 instead of $29.99.

- A few tiers of no-nonsense pricing and you can 🍌 out.

- Limits for tiers based on a value metric.

- General disdain for per seat pricing.

Simple tiers worked for Harmony. They worked for Gauges. They are currently working for Box Out and Speaker Deck.

Tiers and a value metric

We started with this tiered pricing model for Flipper. But the first question was what is the value metric?

We didn't want to limit projects, environments or features in any way, as doing so could encourage awkward use of the product (like using one project for all your projects). We want the product to always feel smooth.

Additionally, we've noticed the more developers you have using feature flags, the more valuable they become. Ding! We saw this during our time at GitHub. Value metric chosen – number of developers.

So we started with 3 plans based on the number of developers (value metric):

- $50 - 2 developers

- $200 - 10 developers

- $400 - 20 developers

But it didn't feel right

The problem with this was $0 (open source) to $50 is a big leap. So was $50 to $200 and $200 to $400 for that matter.

For the same reasons we didn't want to limit features or projects, we didn't want customers to avoid adding a developer because it would tip them into a much larger plan.

- Our goal isn't to become a 🦄. Been there, done that.

- Our goal isn't to squeeze as much 💰 as we can from our customers.

- Our goal is to provide value to customers that makes it worth it for them to pay us and worth it for us to do so. That's it.

Per seat... really?

We brainstormed for a while and decided per seat pricing actually makes a lot of sense for Flipper. Shocking. I never thought I'd read those words written from my weak little programmer fingers 👐.

The value metric is number of developers. More developers for the customer is likely tied very closely to more revenue or funding. As the customer grows, we grow with them. If they shrink, the value they get from us and the cost to them shrink as well.

It just made sense.

- Per seat pricing avoids the big jumps from tier to tier.

- Per seat pricing ties the value to the cost for the customer.

Market Research

Now the question was, how much per seat? We looked around at the market – LaunchDarkly, Split, Rollout, ConfigCat, Prefab, Ajustee, Unleash and Switchover to name a few.

The common theme was $10 - $90 per seat. The products on the higher end of that range were typically also tied to MAU (monthly active users aka actors in Flipper's terms).

Tying to MAU makes a lot of sense as well. If you have more actors that you are checking flags for, you are likely receiving more value by using flags. We don't currently track MAUs and thus don't provide any analytics (aka value) on that data.

We only charge for the value we provide. Since we don't provide analytics yet, there was no reason to try to price in the upper range.

Additionally, we want using Flipper Cloud to be a no brainer, especially for those already using open source Flipper.

We oscillated between $10 and $30 per seat and finally just split the difference – $20 per seat. I'm pretty happy with where we ended up.
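To make the tier jumps concrete, here is a small Ruby sketch comparing the original three tiers with $20 per seat. The tier table and the $20 price come from above; the method names are made up for illustration, and it assumes a team is billed at the smallest tier that fits.

```ruby
# Tier table from the post: [monthly price in dollars, developers included].
TIERS = [[50, 2], [200, 10], [400, 20]].freeze
PER_SEAT_PRICE = 20 # dollars per developer per month

# Price of the smallest tier that fits the team, or nil if none does.
def tiered_price(developers)
  tier = TIERS.find { |_price, seats| developers <= seats }
  tier && tier.first
end

# Per seat pricing grows linearly with the team.
def per_seat_price(developers)
  developers * PER_SEAT_PRICE
end

[1, 2, 3, 10, 11, 20].each do |developers|
  puts format("%2d developers: tiered $%3d, per seat $%3d",
              developers, tiered_price(developers), per_seat_price(developers))
end
```

Adding a third developer is where the difference shows: the tiered price jumps from $50 to $200, while the per seat price moves from $40 to $60.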

But no one will buy if they don't trust you.

The Third Hurdle: Trust

In talking with people about Flipper Cloud, the first thing that always came up was: "...but what happens if you are down, will my app be down?"

This is a great problem! It's not a "you don't provide any value" problem. Or a "you are too expensive" problem.

This is an "I want to use Flipper Cloud, but I want to know it won't hurt me" problem. Making software not hurt you is a specialty of ours.

Before I answer the "what happens if you are down" question, let's dive into some background.

One Feature Check, One API Request

The very first way that the Flipper::Cloud client could work is every time a feature check happens, it makes a request to the Flipper Cloud API to say "hey is this feature enabled for this actor?".

- If we were unavailable, your app would be unavailable.

- If we were available and you were doing more than a single feature check, your app would be slow. Real slow.

Nope. No way that would ever work. I worked on GitHub.com, one of the most trafficked sites in the world, for nearly 7 years.

The majority of my time at GitHub was focused on availability and resiliency. I would never ship something like the aforementioned to the world. That is just asking to tick customers off.

One Request in Your App, One API Request

The great thing about (open source) Flipper is that it is designed to be as performant as you need. It can preload all the data required to check a feature for any actor, typically in one or two network calls.

With a single middleware addition, Flipper can preload all feature data for a request/job in your application. This means you can say "hey is this feature enabled for this actor?" as many times as you want on the page and no additional API requests will be issued.

- If we were unavailable, your app would be unavailable.

- If we were available, no matter how fast your application was, it would always have a base response time of however long it takes for your app to request feature data from our API.

Nope. No way. This would make a 1 to 1 correlation between your traffic and our API traffic. I'm never a fan of tying things together like that.

My goal is always to make things as constant as possible regardless of throughput.
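The difference between the two approaches so far comes down to counting API calls. Here is a minimal Ruby sketch of that. All three classes are hypothetical stand-ins written for this example and are not part of Flipper.

```ruby
# Hypothetical stand-in for the Cloud API that counts how often it is called.
class CountingRemote
  attr_reader :calls

  def initialize(features)
    @features = features
    @calls = 0
  end

  # One network round trip for one feature.
  def get(name)
    @calls += 1
    @features.fetch(name, false)
  end

  # One network round trip for every feature at once.
  def get_all
    @calls += 1
    @features.dup
  end
end

# Approach 1: every feature check is an API request.
class PerCheckClient
  def initialize(remote)
    @remote = remote
  end

  def enabled?(name)
    @remote.get(name)
  end
end

# Approach 2: preload once per app request, then answer checks from memory.
class PerRequestClient
  def initialize(remote)
    @remote = remote
  end

  # Wraps one request/job in your app, like a memoizing middleware would.
  def with_request
    @features = @remote.get_all
    yield self
  ensure
    @features = nil
  end

  def enabled?(name)
    @features.fetch(name, false)
  end
end

# Simulate `requests` app requests that each check a feature `checks` times.
def api_calls_for(client_class, requests:, checks:)
  remote = CountingRemote.new({ search: true, stats: false })
  client = client_class.new(remote)
  requests.times do
    if client.respond_to?(:with_request)
      client.with_request { |c| checks.times { c.enabled?(:search) } }
    else
      checks.times { client.enabled?(:search) }
    end
  end
  remote.calls
end

puts api_calls_for(PerCheckClient, requests: 3, checks: 5)   # 15
puts api_calls_for(PerRequestClient, requests: 3, checks: 5) # 3
```

Preloading removes the multiplier from feature checks, but the API call count still rises in lockstep with app traffic, which is the 1 to 1 correlation described above.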

One API Request per N seconds per thread (or process)

The next step up in efficiency was to move the request on every page load to one request every N seconds per process per thread. This is far from ideal, but we actually used it on Box Out and Speaker Deck for more than a year.

Flipper is built on adapters. And the adapters are very composable/layerable.

At GitHub, we used a MySQL adapter, wrapped by a Memcached adapter. This meant all reads first went to Memcached and then only to MySQL if the cache wasn't populated.

GitLab does something similar. Their Flipper instance caches in memory, falling back to Redis, which falls back to Active Record.

This adapter layering is also how the per request memoization I talked about before works. I created a memoizing adapter and a middleware that ticks it on and off per request. Anytime you use Flipper.new(adapter), I wrap the adapter you provide with the memoized adapter, so you don't have to.
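Here is a rough Ruby sketch of that layering idea. These classes are simplified stand-ins written for this example; Flipper's actual adapter interface has more methods than the `get` and `set` assumed here.

```ruby
# Hypothetical, simplified adapter: it only knows get(key) and set(key, value).
class MemoryAdapter
  attr_reader :reads

  def initialize(data = {})
    @data = data.dup
    @reads = 0
  end

  def get(key)
    @reads += 1
    @data[key]
  end

  def set(key, value)
    @data[key] = value
  end
end

# Wraps a slower primary adapter with a faster cache adapter.
class ReadThroughAdapter
  def initialize(cache:, primary:)
    @cache = cache
    @primary = primary
  end

  # Reads try the cache first and only fall back to the primary on a miss.
  def get(key)
    cached = @cache.get(key)
    return cached unless cached.nil?

    value = @primary.get(key)
    @cache.set(key, value) unless value.nil?
    value
  end

  # Writes go to the primary and refresh the cache.
  def set(key, value)
    @primary.set(key, value)
    @cache.set(key, value)
  end
end

database = MemoryAdapter.new({ "search" => true })
cache = MemoryAdapter.new
adapter = ReadThroughAdapter.new(cache: cache, primary: database)

adapter.get("search") # miss: reads the cache, then the primary
adapter.get("search") # hit: served from the cache alone
puts database.reads   # 1
```

Because the wrapper exposes the same two methods as the adapters it wraps, it can itself be wrapped again, which is what makes stacks like memory over Redis over Active Record possible.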

Knowing how adapters work in Flipper (I mean I wrote them right? 😆), I created a sync adapter that worked with a local and a remote. It defaulted to synchronizing on an interval to keep the local in sync with the remote.

This moved Flipper::Cloud from making API requests on every request in your app to one API request every N seconds per process/thread in your app. More processes in your app meant more time in your requests talking to our API.

- If we were unavailable, you only experienced slower requests every N seconds per process. We also stored the data in your app, so feature check reads still worked.

- If we were available, you'd see one request to Flipper per process per thread (if using threads).
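The interval idea can be sketched in a few lines of Ruby. This is not the real sync adapter, just an illustration under simple assumptions: `IntervalSync` is a hypothetical class, the remote is any callable that returns a hash of features, and the clock is injectable so the behavior can be shown without sleeping.

```ruby
# Hypothetical sketch of interval syncing: reads come from a local copy, and
# the remote is consulted at most once per `interval` seconds.
class IntervalSync
  attr_reader :remote_calls

  def initialize(remote:, interval:, clock: -> { Process.clock_gettime(Process::CLOCK_MONOTONIC) })
    @remote = remote # callable returning a Hash of feature => boolean
    @interval = interval
    @clock = clock
    @local = {}
    @last_sync_at = nil
    @remote_calls = 0
  end

  def enabled?(name)
    sync if sync_due?
    @local.fetch(name, false)
  end

  private

  def sync_due?
    @last_sync_at.nil? || (@clock.call - @last_sync_at) >= @interval
  end

  def sync
    # Mark the attempt first so a failing remote is not retried on every read.
    @last_sync_at = @clock.call
    @remote_calls += 1
    @local = @remote.call
  rescue StandardError
    # Remote is down: keep serving the last known local copy.
  end
end

now = 0.0
features = { search: true }
flags = IntervalSync.new(remote: -> { features }, interval: 10, clock: -> { now })
flags.enabled?(:search) # first read syncs
now += 5
flags.enabled?(:search) # within the interval, no remote call
now += 5
flags.enabled?(:search) # interval elapsed, syncs again
puts flags.remote_calls # 2
```

Each process or thread would hold its own instance, so the number of remote calls still scales with the number of processes and threads.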

Much improved. Still, anyone who has worked on a high throughput app knows that this isn't a good idea long term.

One API request per N seconds

The next improvement was a rake task. Ugh. Yuck. But it worked great! I moved Box Out to use the Active Record adapter in production and added a rake task to sync every N seconds.

The rake task configured Flipper to use Flipper::Cloud with the same Active Record adapter. Every N seconds the rake task would run, Cloud would realize it had not synced and would do so.

- If we were available, your app would just make one request every N seconds.

- If we were unavailable, your app would remain available, as all reads go to the adapter of your choosing that lives in your app (Active Record, Redis, Mongo, whatever).

This solved the performance problem of making requests to the Flipper Cloud API in your app's request lifecycle. But there were a couple of downsides:

- You needed a separate process to perform the syncing.

- You had to wait for feature changes as syncing only happened every N seconds.

From Pull to Push

I knew we needed to move from pull (all of the aforementioned stuff) to push in a way that isolates your availability from ours.

My goals were:

- fast enough sync that it feels instant (aka < a few seconds).

- no separate process for you to run.

- minimal impact to performance.

At some point, it occurred to me that webhooks could likely meet all of those goals.

- Webhooks feel instant. Yes, our workers delivering the webhooks could get behind, but the common case is the webhook is delivered faster than you can go back to your app in the browser and refresh.

- Webhooks can be mounted in your web app and thus avoid needing a separate process. Flipper::Cloud now ships with a rack app you can mount in Rails or with Rack::URLMap in any rack based application (e.g. Sinatra, Hanami, or Roda).

- Webhooks minimize performance impact to your application. They isolate your application's need to talk to Flipper Cloud to a single request in your app that is receiving the hook – just a conversation between machines.

I built the webhook system and switched over Box Out and Speaker Deck without issues. Our pre-launch people switched over without issues as well.

Webhooks have made syncing changes fast, improved customer app performance and dramatically reduced the number of API requests our app has to serve. Win, win, win.

They are also really simple to use. For example, this is how they get mounted in a Rails app:

    SpeakerDeck::Application.routes.draw do
      mount Flipper::Cloud.app, at: "_flipper"
    end

The example above creates an endpoint that you can add as a webhook in Flipper Cloud (https://yourdomain.com/_flipper/webhooks). If you want to see it working end to end, check out this one minute screencast I whipped up.
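To give a feel for why a webhook endpoint is so cheap for your app, here is a rough Ruby sketch of one. It is not the app that Flipper::Cloud ships: `WebhookApp` is a hypothetical class, and `syncer` is assumed to be any callable that pulls the latest feature data into your local adapter. The only thing it relies on is the Rack convention of `call(env)` returning a status, headers and body.

```ruby
# Hypothetical Rack-style endpoint: a POST to /webhooks triggers one sync.
class WebhookApp
  def initialize(syncer)
    @syncer = syncer
  end

  # Rack apps respond to call(env) and return [status, headers, body].
  def call(env)
    unless env["REQUEST_METHOD"] == "POST" && env["PATH_INFO"] == "/webhooks"
      return [404, { "content-type" => "text/plain" }, ["not found"]]
    end

    @syncer.call
    [200, { "content-type" => "text/plain" }, ["ok"]]
  rescue StandardError
    # Report the failure to the sender; feature reads in the app are unaffected.
    [500, { "content-type" => "text/plain" }, ["sync failed"]]
  end
end

syncs = 0
app = WebhookApp.new(-> { syncs += 1 })
status, _headers, _body = app.call({ "REQUEST_METHOD" => "POST", "PATH_INFO" => "/webhooks" })
puts status # 200
puts syncs  # 1
```

The only request that ever waits on a sync is the webhook delivery itself. Every other request in the app keeps reading from local data.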

The Hurdles are Hurdled

At this point, we had a piece of software that:

- had a price.

- people could pay for.

- people could trust.

All that was left was to enable the subscriptions feature in the Flipper Cloud project in the Fewer & Faster organization. Yep, we use Flipper::Cloud for Cloud. Cloud-ception.

🐬Get it while its hot! https://t.co/CZawrxidkQ

— John Nunemaker (@jnunemaker) January 11, 2021

My favorite features: personal environments (with prod mirroring), audit log rollbacks, and autocomplete for actors/groups.

If you've been wanting to try out @flipper_cloud, head on over and sign up!

Finally, on January 11, 2021, Flipper Cloud launched.

So how is it going? Honestly, pretty great. Outside of opening the doors and a few emails, we haven't done any marketing, but people are signing up and paying. Time to start getting the word out!

Want more? Head on over to our getting started guide or check out our demo Rails app with Cloud integrated.

If you are curious, migrating from open source Flipper is a few lines of code. We also have several examples of how to use Flipper::Cloud, webhooks and migrate from another adapter.

Feature flags have changed the way I build and release software. Regardless of your app size or development process, feature flags are beneficial. Often, I think people assume that flags are just for large companies, but I'd say no.

Box Out is two developers and I don't even know what we'd do if we didn't have flags. Same goes for Fewer & Faster. I'm so used to reaching for Flipper when I start anything new that I'd be lost without it.

I want the same for you. I really want Flipper Cloud to be valuable for you. If you find anything missing or have any great ideas, please reach out.

To anyone curious, I'd be happy to email, chat, zoom, demo or even remote pair with you to set up Flipper Cloud, so you can see firsthand how it would work for you. I mean it. Don't be shy.